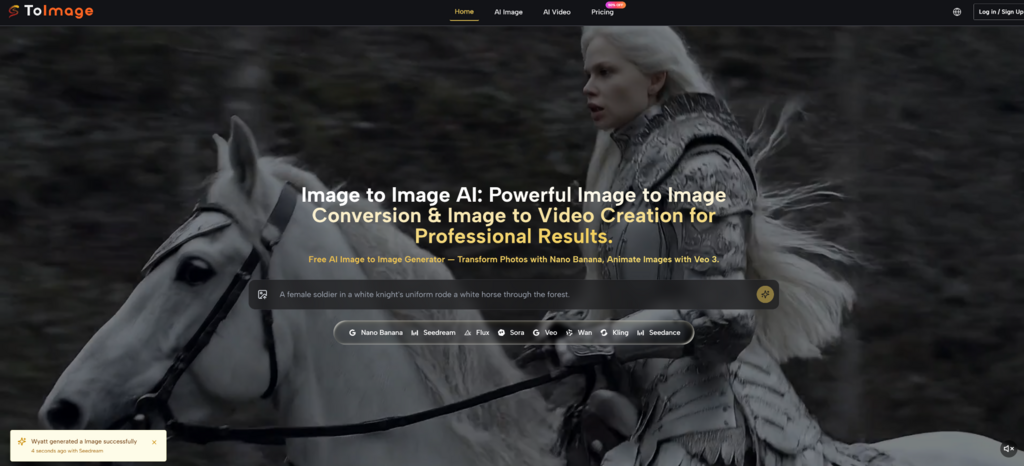

Most visual tools promise speed, but speed alone does not solve the real problem. Many creators can produce something quickly, yet still struggle to keep a character consistent, preserve a useful composition, or move from rough inspiration to a usable asset without starting over. That is why Image to Image feels more important than it may first appear. It does not ask you to generate from a blank page. It lets you begin with an existing visual idea and then push it in a more deliberate direction.

This matters because creative work often breaks down at the moment between intention and revision. You may already have a photo, a draft render, a product shot, or a sketch that contains the right structure, but not the right mood, finish, or style. In my view, the value of this kind of workflow is not just that it makes pictures look different. It changes how you make decisions. Instead of describing everything from scratch, you guide transformation from something concrete.

That shift sounds small, but it affects almost every stage of visual production. It changes how you brief, how you iterate, and how much control you feel you still have after AI enters the process.

Why Beginning With An Existing Image Feels Different

When people first think about generative visuals, they often imagine text as the main input. You type a description, the system interprets it, and the result appears. That can be exciting, but it also leaves a lot of room for drift. The model may understand the atmosphere but miss the composition. It may get the subject right but reshape the face, clothing, or setting in unexpected ways.

Starting from an image changes the balance. The source image already contains structure. It gives the system boundaries. In practice, that usually means better continuity. A pose can remain recognizable. A scene can stay anchored. A product can keep its silhouette while the surrounding style changes. This makes the workflow especially useful when you do not want endless novelty. You want directed change.

That is the deeper appeal here. Image transformation is less about replacing your judgment and more about extending it. You are not saying, “surprise me with anything.” You are saying, “take this and move it further.”

How The Core Transformation Process Actually Works

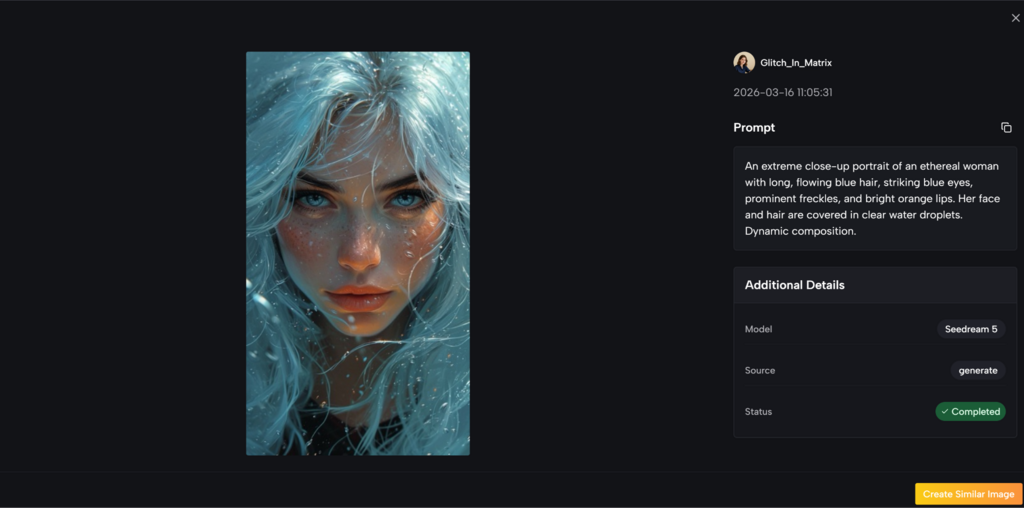

The visible workflow of Image to Image AI is straightforward, but the creative logic behind it is worth understanding. At a practical level, the process begins with an uploaded image, then combines that image with a prompt and a model choice. From there, the system generates a revised result that reflects both the original visual context and the new instruction.

The Source Image Sets Structural Boundaries

An existing image acts as a visual anchor. It contains relationships that are hard to describe cleanly in words alone: spacing, perspective, gesture, object placement, facial balance, lighting clues, and background rhythm. In my observation, that is why image-based generation often feels more stable than prompt-only generation. The system has less ambiguity to resolve.

This does not mean everything is locked. It means the starting point is more defined. That definition is what gives transformation workflows their sense of control.

The Prompt Describes Direction Rather Than Total Creation

With this kind of process, the prompt is not responsible for inventing the entire scene. Its role is more targeted. It tells the system what to change, what mood to pursue, or what visual language to apply. That may include style cues, material cues, atmosphere, tone, or contextual adjustments.

Because of that, prompts can become more practical. You are often not writing a full cinematic description. You are describing movement away from the original state.

This Makes Revision More Natural Than Recreation

That is an important difference. Recreation tends to be fragile. A small wording change can produce a very different result. Revision tends to be steadier. You keep more of the original logic while changing selected aspects of it.

The Model Choice Determines What Kind Of Change Emerges

The platform does not treat all models as interchangeable. Some are presented as stronger for realism and image fidelity. Some appear better suited to fast iteration. Others are more useful when you want context-aware edits or more targeted changes. That separation is meaningful. In creative work, speed, realism, and control are rarely identical goals.

What The Real Workflow Looks Like In Practice

One reason this kind of tool is approachable is that the official usage flow is relatively short. It does not require a long setup chain or a deeply technical preparation process.

Step One Upload A Base Image

The first step is to upload the image you want to work from. This image becomes the visual foundation for the result, not merely a loose reference. It holds the composition and gives the system something concrete to interpret.

Step Two Describe The Intended Change

Next, you enter a prompt that explains what kind of transformation you want. This could involve style redirection, atmosphere changes, subject refinement, or a broader visual shift. The key point is that the prompt works in relation to the uploaded image rather than in isolation.

Step Three Choose The Most Suitable Model

After that, you choose a model according to the kind of output you want. In my view, this is one of the more practical design choices. It acknowledges that not every task needs the same engine. A fast concept pass and a polished visual are not the same job.

Step Four Generate And Refine

Once the result is generated, the process becomes iterative. You compare what changed, decide what held up well, and adjust from there. This stage is where the workflow becomes genuinely useful. Good image transformation is rarely about one perfect attempt. It is about reaching the right version through controlled rounds.
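The four steps above can be sketched in a few lines of Python. This is only an illustration of the workflow's shape, not the platform's actual interface: `TransformRequest`, `fake_generate`, `refine`, the file name, and the model label `fast-draft` are all hypothetical, and the generator is a stand-in that returns a description instead of an image.

```python
from dataclasses import dataclass, replace
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class TransformRequest:
    """Hypothetical container for the three inputs the workflow names."""
    image_path: str  # Step one: the base image
    prompt: str      # Step two: the intended change
    model: str       # Step three: the chosen model


def fake_generate(request: TransformRequest) -> str:
    """Stand-in for a real generation call (assumption: no real API is used)."""
    return f"{request.image_path} | {request.model} | {request.prompt}"


def refine(
    request: TransformRequest,
    prompts: List[str],
    generate: Callable[[TransformRequest], str],
) -> List[Tuple[TransformRequest, str]]:
    """Step four: run controlled rounds that change only the prompt."""
    rounds = []
    for prompt in prompts:
        # replace() copies the request, so image and model stay fixed.
        current = replace(request, prompt=prompt)
        rounds.append((current, generate(current)))
    return rounds


base = TransformRequest(
    image_path="product_shot.png",
    prompt="soft studio lighting, matte finish",
    model="fast-draft",
)
rounds = refine(
    base,
    [base.prompt, base.prompt + ", warmer color palette"],
    fake_generate,
)
for _, result in rounds:
    print(result)
```

Keeping the base image and model fixed while varying only the prompt is one way to make each round comparable with the last. In a real session you might also switch models between rounds.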

How Different Models Affect Creative Outcomes

The availability of multiple models is not just a catalog feature. It changes how a creator thinks about the task itself. When the platform separates models by strength, it encourages a more intentional workflow.

A realism-oriented model may be better when you need detailed texture, more natural lighting, or an output that feels closer to photography. A faster model may be better when you are still exploring options and do not want to spend too much time on each pass. A context-aware editing model becomes more valuable when the goal is not to remake the whole image, but to revise selected parts while leaving the rest intact.

That distinction matters because many creators do not actually want “the best model” in the abstract. They want the right model for the current stage. Early ideation, client variation, campaign adaptation, and final delivery each favor different tradeoffs.
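One way to picture "the right model for the current stage" is a small lookup from stage to model category. The sketch below is an assumption made for illustration: the stage and category names paraphrase this article, and the mapping itself is one plausible assignment, not something the platform prescribes.

```python
from enum import Enum


class Stage(Enum):
    EARLY_IDEATION = "early ideation"
    CLIENT_VARIATION = "client variation"
    CAMPAIGN_ADAPTATION = "campaign adaptation"
    FINAL_DELIVERY = "final delivery"


class ModelKind(Enum):
    FAST = "fast iteration"
    CONTEXT_AWARE = "context-aware editing"
    REALISM = "realism and image fidelity"


# Hypothetical defaults: adjust to your own tradeoffs.
DEFAULT_KIND = {
    Stage.EARLY_IDEATION: ModelKind.FAST,
    Stage.CLIENT_VARIATION: ModelKind.FAST,
    Stage.CAMPAIGN_ADAPTATION: ModelKind.CONTEXT_AWARE,
    Stage.FINAL_DELIVERY: ModelKind.REALISM,
}


def pick_model_kind(stage: Stage, partial_edit: bool = False) -> ModelKind:
    """Return a model category for a stage.

    If only selected parts of the image should change, prefer the
    context-aware category regardless of stage.
    """
    if partial_edit:
        return ModelKind.CONTEXT_AWARE
    return DEFAULT_KIND[stage]


print(pick_model_kind(Stage.EARLY_IDEATION).value)
print(pick_model_kind(Stage.FINAL_DELIVERY, partial_edit=True).value)
```

The point of the sketch is that the choice depends on the job at hand, so the same image may pass through different categories as a project moves from exploration to delivery.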

Where This Workflow Becomes Most Useful

The clearest use cases are not always the most dramatic ones. In fact, the value often shows up in ordinary production problems.

Marketing teams may want several visual directions from a single product shot without reshooting the campaign. Designers may want to test styles on top of an established composition. Social media creators may want to preserve a recognizable subject while refreshing presentation across formats. Brand teams may need variety without sacrificing identity.

In each of these cases, the real advantage is continuity. You are not endlessly searching for a new image that happens to feel close enough. You are evolving the one you already have.

A Simple Comparison Of What It Helps Most

| Creative Need | Why Image-Based Transformation Helps | What It Still Depends On |
| --- | --- | --- |
| Keeping composition stable | The source image preserves structure | The prompt must not contradict the image too heavily |
| Maintaining visual identity | Existing subject details provide continuity | Multiple tries may still be needed for precision |
| Exploring style variation | One base image can be reinterpreted in several ways | Model choice strongly affects the result |
| Improving workflow speed | You revise instead of restarting from scratch | Faster output does not always mean best output |
| Producing usable assets | The process is more directed than blank-slate generation | Final polish may still require iteration |

The table makes the main point clear: the strength of this workflow is not pure novelty. It is guided variation.

What Users Should Stay Realistic About

This kind of system can feel smoother than starting from text alone, but it is still not magic. Results remain sensitive to prompt quality, model selection, and the suitability of the source image itself.

Better Inputs Usually Lead To Better Results

A cluttered or weak source image gives the system less coherent structure to build on. Likewise, a vague instruction can produce a vague transformation. In my experience, the best outcomes usually come from clear intent rather than long prompts.

Iteration Is Still Part Of The Process

Even when the overall direction is strong, the first generation may not fully match what you want. Sometimes the style lands but the details drift. Sometimes the composition stays stable but the emotional tone does not. That is normal. The point is not that iteration disappears. The point is that iteration becomes more productive.

Control Improves, But Absolute Precision Is Not Guaranteed

The workflow offers more consistency than many blank-slate approaches, yet there is still an interpretive layer. That means users benefit most when they treat the tool as a guided creative partner rather than a deterministic editor.

Why This Matters Beyond A Single Tool

What interests me most is not only the feature set itself, but what it suggests about the broader direction of creative software. The industry increasingly seems to be moving away from the idea that AI must begin with nothing. More systems are being built around transformation, reference, continuity, and selective control.

That makes sense. Most real creative work does not begin from emptiness. It begins from drafts, fragments, references, mistakes, half-finished assets, and visuals that are already close but not quite right. A good transformation workflow respects that reality.

For that reason, the significance of image-based creation is larger than a single category label. It points toward a more mature relationship between creators and generative systems. Instead of choosing between manual editing and total automation, users get something in between: a way to preserve intention while still gaining speed.

That middle ground is where this workflow feels most convincing. It does not replace visual judgment. It gives visual judgment a more efficient starting point.